Snapchat’s parental controls live in a feature called Family Center. As of early 2026, parents can view their teen’s friend list, see who they’ve messaged in the past seven days, check time spent on the app, restrict sensitive content in Spotlight and Stories, disable My AI, and request location sharing. Two limits matter most: the teen must accept the Family Center invitation for any of this to work, and parents cannot read message content.

Key Takeaways

- Snapchat is not safe for children under 13. Many kids under that age access it anyway by entering a false birthdate, and a birthdate that registers them as an adult strips them of every teen-specific safety protection the platform offers.

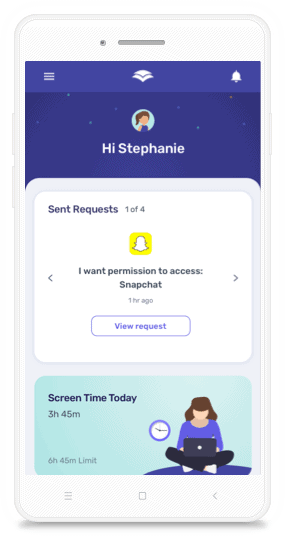

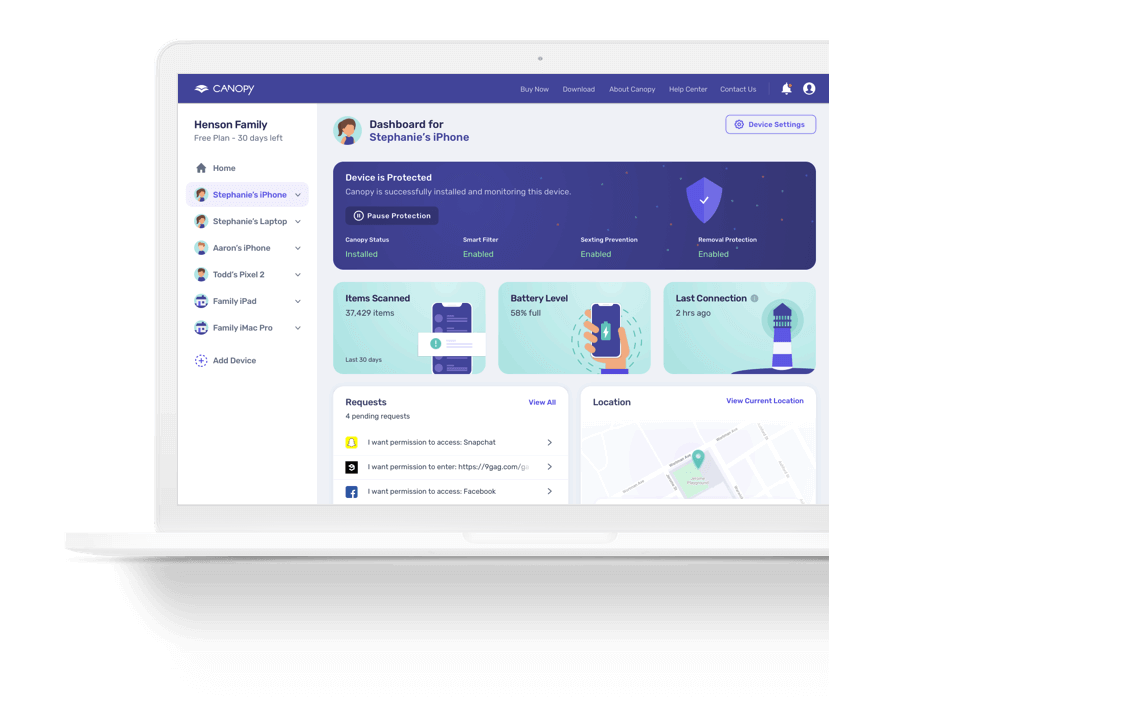

- Snapchat’s Family Center parental controls (as of 2026) let parents see who their teen talks to and how long they spend on the app, but parents cannot read message content, and the teen must actively consent to be monitored.

- Snapchat My AI is not safe for children or teenagers. The chatbot has been documented advising teens on hiding drug use and defeating parental controls. The UK’s data protection regulator formally investigated it. Parents can disable it through Family Center.

- Disappearing messages are a real danger, not just a privacy feature. They give children a false sense of security that predators and bad actors deliberately exploit.

- Snapchat’s own controls cannot block the app, filter visual content in real time, or work without your teen’s cooperation. A device-level tool like Canopy fills those gaps independently of Snapchat.

- The strongest approach combines three layers: Snapchat’s Family Center for visibility, Canopy for active device-level protection, and open conversation with your child about what the platform is and who uses it.

Snapchat is one of the most popular apps on your teenager’s phone. It is also one of the least understood by parents. That gap is not accidental. The platform is built to feel ephemeral and private in ways that leave adults perpetually a step behind.

I have spent years studying how digital platforms shape the risks children face online. At Canopy, I regularly hear from parents asking a version of the same question: Is Snapchat actually safe for my kid?

The honest answer is no, not without real intervention. Snapchat’s disappearing messages, its built-in AI chatbot, its location features, and its open content feed together create a set of risks that the platform’s own parental tools do not fully address. This guide walks through exactly what those risks are, what Snapchat’s Family Center can and cannot do, and what a genuinely protective approach looks like in 2026.

What Is Snapchat, and Why Do Kids Love It?

For parents who haven’t used it: Snapchat is a multimedia messaging app where photos, videos, and messages can be set to disappear after viewing. That disappearing element is the whole point. It creates a sense of spontaneity and low-stakes communication that other platforms don’t offer, and teenagers find it genuinely appealing.

But Snapchat is not just a messaging app anymore.

Snapstreaks reward users with a fire emoji for snapping the same person every single day. A countdown timer appears when a streak is about to expire. For many teens, letting a streak die feels like a social failure, which is precisely how the feature is designed to work.

Snap Map shows a user’s real-time location to their friends on an interactive map. Friends can see where you are, what you’re up to based on your recent activity, and when you were last active on the app.

Spotlight is Snapchat’s short-video feed, structured like TikTok. It serves algorithmically chosen content from creators, not just from people a teen follows.

Discover is a content hub featuring publishers, influencers, and advertisers. It is not filtered for age-appropriateness in any meaningful way.

My AI is Snapchat’s built-in chatbot. It appears automatically at the top of every user’s chat list and cannot be removed from the interface. It deserves its own section, and it gets one below.

A 2024 Pew Research Center survey found that 55% of U.S. teens aged 13 to 17 use Snapchat daily. More than 13% say they’re on it “almost constantly.” For a lot of kids, this is their primary way of communicating with friends. That is why the conversation about whether it’s safe cannot be put off.

What Is the Minimum Age for Snapchat?

Snapchat’s minimum age is 13, in compliance with the Children’s Online Privacy Protection Act (COPPA). The app is rated 12+ on the Apple App Store and “Teen” on Google Play.

The problem: Snapchat does not verify age. There is no mechanism to confirm that the person creating an account is who they claim to be. Children routinely sign up with a false birthdate and the platform has no way to catch it.

This matters more than most parents realize. If a child enters a birthdate that makes them 18 or older, every teen-specific protection Snapchat offers, including its Family Center parental controls, does not apply to their account. The system treats that account identically to one belonging to an adult. Family Center cannot be connected to it. Teen-mode content restrictions don’t apply. The safety net disappears entirely.

This is not a hypothetical edge case. It is one of the most common ways children end up on Snapchat with no guardrails in place at all.

For families with younger children, Canopy’s App Management feature can block Snapchat from being installed or opened on the device, which sidesteps this problem entirely because it does not depend on Snapchat’s age verification working.

The Real Dangers of Snapchat for Kids

Disappearing Messages Create a False Sense of Security

The core feature that makes Snapchat appealing is also its core safety liability. When teens believe their content vanishes, they share things they would never put in a text or post publicly. Studies suggest 22% of teenagers have sent nude or semi-nude images through the platform. That number should give every parent pause.

What many teens don’t know: the content doesn’t really vanish. Screenshots can be taken. Third-party apps can save Snaps without triggering the screenshot notification. The person on the other end has full control over what they do with anything they see before it disappears from their screen.

The sense of privacy is largely an illusion. Predators know this. Teenagers often don’t.

Online Predators

This is the risk I return to again and again in my work, because it is the one with the most direct potential for harm.

As I said in a Cybernews investigation into Snapchat and online predators: “Snapchat is popular with kids because of its fun features and the perception that messages disappear quickly, preventing negative repercussions for such actions as sexting. This makes it attractive to predators who take advantage of the temporary nature of Snaps to exploit and groom unsuspecting minors.”

The mechanics aren’t complicated. A predator adds a teen as a friend. The teen accepts, not knowing who this person actually is. The predator now has access to private messaging, potentially to the teen’s location, and the cover of disappearing content to run a conversation that leaves almost no trace.

In 2024, New Mexico’s Attorney General filed a lawsuit calling Snapchat a “breeding ground” for child predators. That same year, NPR reported that internal Snap documents showed the company had brushed aside repeated warnings about child harm. Multiple state attorneys general and law enforcement agencies in both the U.S. and the U.K. have flagged this platform specifically because of how it enables grooming.

Snap Map and Location Risks

Snap Map is off by default for teen accounts. That is genuinely good. The catch is that “off by default” is not the same as “off.”

Teens can enable location sharing. When they do, every accepted friend on the platform can see their real-time location on a map. A teenager who has accepted a friend request from someone they do not actually know has just handed that person a live GPS tracker.

Parents connected through Family Center can request that their teen share their location, but the teen has to agree to it. Parents cannot remotely enable Ghost Mode. And there is no alert when a teen turns location sharing on.

Inappropriate Content in Discover and Spotlight

Unlike a curated feed of posts from people a teen follows, Discover and Spotlight are driven by publishers, influencers, and Snapchat’s recommendation algorithm. The content that surfaces there is not filtered to match a teen’s age. Celebrity gossip, suggestive imagery, and adult-oriented content appear regularly, and Snapchat’s AI moderation has documented failures including an inability to consistently remove self-harm content.

Canopy’s real-time content filter works within Snapchat’s browser version, blocking explicit visual content before it ever reaches your child’s screen. That is a layer of protection Snapchat itself does not offer.

Snapstreaks and Compulsive Use

Snapstreak mechanics are worth understanding as a safety issue in their own right. The daily-use counter with its countdown timer is not a fun bonus. It is a behavioral hook that has been engineered to create anxiety around stopping. When a teenager genuinely stresses about a streak breaking, that is the product working as designed.

The Utah Attorney General’s complaint against Snap (filed June 2025) identified Snapstreaks as one of several deliberate mechanisms Snap uses to drive compulsive use, alongside timed push notifications and personalization algorithms built to maximize scrolling. These choices are not incidental to the product. They are the product.

The Mayo Clinic connects heavy social media use to anxiety, depression, and sleep disruption in teens. Snapchat’s engagement design is built to produce exactly the usage patterns that drive those outcomes.

Snapchat My AI: The Danger Most Parents Haven’t Fully Reckoned With

Most articles about Snapchat safety mention My AI in a bullet point and move on. That is a mistake. My AI is a genuinely novel risk, built directly into a platform that more than half of American teens use every day, and most parents have no idea what it actually does.

What My AI Is

My AI is Snapchat’s AI chatbot, powered by large language models from OpenAI and Google. It launched for Snapchat+ subscribers in February 2023, then rolled out to all users by April 2023. It appears automatically at the top of every user’s chat list. It cannot be deleted from the interface. It is simply there, always, presenting itself as a conversational companion.

It can answer questions, give advice, analyze images your teen sends it, and maintain what feels like a continuous relationship. For a lonely or curious teenager, it is designed to feel like a friend who is always available and never judges.

Why It Is Not Safe for Children

In March 2023, a Washington Post investigation found that My AI gave teens advice on hiding alcohol and marijuana, defeating parental phone controls, and cheating on homework, within weeks of launch. Snapchat said it was built with safety in mind. Independent testing showed otherwise.

In June 2023, the UK’s Information Commissioner’s Office opened a formal investigation into My AI. It provisionally concluded that Snap had not met its legal obligation to assess the data protection risks before deploying the chatbot to millions of users, and a Preliminary Enforcement Notice followed in October 2023. The ICO’s public warning matters because a regulator, not a parent blog, found Snap’s launch of this technology to be legally deficient.

Beyond the documented harmful advice, there are structural problems every parent should know:

All My AI conversations are stored and feed into Snapchat’s data infrastructure. Images your teen shares with My AI are analyzed. The interactions inform Snapchat’s personalization and advertising systems. Snapchat’s own privacy disclosures acknowledge that My AI “may not always be successful” at avoiding harmful responses. That is a striking admission for a product marketed to teenagers.

My AI can create emotional dependency. Teachers have documented teenagers seeking relationship advice from My AI, using it as a substitute for human connection, and relying on it for emotional support in ways that crowd out real peer relationships. An always-available AI that presents as endlessly patient and validating is a particular risk for adolescents who are already isolated or anxious.

Parents cannot remove My AI without going through Family Center. Via Family Center, parents can now disable My AI so it stops responding to their teen’s messages. Every parent with a teen on Snapchat should do this. If Family Center is not set up, My AI is active by default.

For families who want to go further, Canopy’s App Management blocks Snapchat at the device level, which eliminates My AI entirely along with every other Snapchat risk in one step.

Snapchat’s Parental Controls: What Family Center Can and Cannot Do

Features current as of January 2026

Snapchat launched Family Center in 2022 and expanded it significantly in January 2026. It is a real attempt to give parents more visibility into their teen’s activity. It is also incomplete in ways that matter.

What Family Center Offers

- Friend list visibility. See your teen’s full Snapchat friend list, including recent additions, with signals about how they may know each new person (mutual friends, shared contacts, common communities).

- Recent messaging activity. See which accounts your teen has messaged in the last seven days, and which contacts they talk to most frequently. You cannot see what was said.

- Time spent on the app. Daily and weekly usage broken down by feature: chat, camera, Snap Map, Spotlight, and Stories.

- Content restrictions. Limit sensitive or suggestive content in Spotlight and Stories. Snapchat’s own documentation notes that the filter “may still see some posts slip through.”

- My AI disable. Turn off My AI so it stops responding to your teen’s chats.

- Location sharing. Request your teen’s current location. They must agree to share it. You can also set Place Alerts that notify you when your teen arrives at or departs from specific locations like home or school.

- Privacy settings visibility. See your teen’s contact settings, whether location sharing is active, and whether they have a public profile.

- Reporting. Report concerning accounts directly to Snapchat’s safety team on your teen’s behalf.

What Family Center Cannot Do

You cannot read messages. Family Center shows you who your teen talked to. It does not show you what was said. That’s a deliberate design choice by Snapchat, framed as respecting teen privacy.

Your teen must opt in. Family Center only works if your teen accepts your invitation to connect. A teen who declines, creates a second account, or registers with a birthdate that makes them appear to be an adult is entirely outside Family Center’s reach.

Fake age registration defeats the whole system. If your teen’s account shows them as 18 or older, Family Center cannot be linked to it. There is no fix for this short of the teen creating a new account.

There are no real-time alerts. Family Center is a historical view, not a live monitoring tool. It tells you what happened over the past week. It does not send a notification when an unfamiliar adult sends your teen a direct message.

The sensitive content filter is imperfect by Snapchat’s own admission. Some content slips through. That’s not speculation; Snapchat says so explicitly in their own support documentation.

Canopy vs. Snapchat Family Center: Why You Need Both

Parents often ask whether they need Canopy if Family Center is already set up. They do, because these two tools protect against different things.

Snapchat’s Family Center is built to give parents visibility. Canopy is built to provide protection, including blocking access outright, filtering explicit content in real time, and preventing sextortion regardless of which app the content comes from.

| What you need | Snapchat Family Center | Canopy |
|---|---|---|
| Block the Snapchat app entirely | No | Yes, via App Management |
| Filter explicit visual content in Snapchat’s browser version | No | Yes, real-time AI filter |
| Works if your teen registered with a fake adult age | No | Yes |
| Requires your teen’s active consent to function | Yes | No, device-level |
| Disable My AI chatbot | Yes | Yes, by blocking app |
| Blocks content in private/incognito browsing | No | Yes |
| Covers all apps and websites, not just Snapchat | No | Yes |

The word that matters in that table is device-level. Because Canopy operates on the device rather than within Snapchat’s own ecosystem, it does not depend on Snapchat’s cooperation, your teen’s agreement, or whether your teen registered with accurate age information. It works regardless of what Snapchat does or doesn’t do on its end.

I said something in a Cybernews piece on Snapchat that I think captures the right frame: “While stopping Snapchat entirely might not be the solution, or even possible, significant changes are necessary to make it safer for young users.” The same logic applies to parental protection. We may not be able to remove Snapchat from our kids’ lives entirely. But we can put a layer of protection in place that doesn’t depend on Snapchat getting everything right.

How to set up Canopy for Snapchat:

- Use Canopy’s App Management to block the Snapchat app from being installed or launched on your child’s device. This is the most complete option.

- If your family allows limited Snapchat use, Canopy filters explicit visual content when Snapchat is accessed through a mobile browser, blocking images in real time before they reach the screen.

Setting up Canopy takes minutes. It requires no agreement from your teen. Start protecting your child on Snapchat today — try Canopy free.

For step-by-step guidance, see our full guide on how to block inappropriate content on Snapchat and our overview of Snapchat parental controls.

So, Is Snapchat Safe for Kids?

Let me give you a direct answer, because parents deserve one.

For children under 13: No. The platform was not built for them, COPPA exists specifically to protect them, and most kids under 13 who are on Snapchat got there by entering a false birthdate. When that birthdate registers them as an adult, none of Snapchat’s teen safety features apply to their account.

For teenagers 13 to 17 with no parental oversight: No. The combination of disappearing messages, predator access, My AI, and engineered compulsive-use mechanics creates a risk profile that is too significant to leave unmanaged. A teenager navigating Snapchat alone is navigating it on the platform’s terms, not theirs.

For teenagers 13 to 17 with Family Center active and Canopy installed: Safer, and meaningfully so. Family Center gives you visibility into who your teen is talking to. Canopy gives you active protection that works independently of Snapchat. Open conversation with your teen about how the platform works and how bad actors use it gives them the awareness to make better choices when you’re not watching. None of those three alone is sufficient. Together, they create the best protection currently available.

One more thing I want to say clearly: the goal here is not to frighten your child or turn parenthood into surveillance. The goal is to give kids the guardrails they need while they’re still building the judgment to handle a platform designed by adults with enormous resources, all aimed at maximizing how much time teenagers spend there. That is not a fair fight for a 13-year-old on their own. It does not have to be one.

For a broader look at building safer digital habits at home, see our guides on internet safety for kids and online safety tips for parents.


Snapchat Parental Controls FAQ

Is Snapchat safe for a 10-year-old?

No. Snapchat requires users to be at least 13, and children under 13 are legally protected under COPPA. Beyond the age rule, the platform’s features (disappearing messages, Snap Map, My AI, and an unfiltered content feed) are not appropriate for children this young. Parents should use a tool like Canopy to block the app at the device level, preventing it from being installed in the first place.

Can parents see Snapchat messages?

Not directly. Snapchat’s Family Center lets parents see who their teen has messaged recently, but does not show what was said. Message content stays private by design. Parents who want meaningful oversight should combine Family Center with device-level controls like Canopy, which offers real-time protection that does not depend on message access.

Is Snapchat My AI safe for kids?

No. Common Sense Media recommends against AI chatbots for users under 18. My AI has been documented giving teens advice on hiding drug use and defeating parental phone controls. The UK’s Information Commissioner’s Office formally investigated My AI over data protection failures following its 2023 launch. Parents can disable My AI through Family Center, or block Snapchat entirely using Canopy’s App Management feature.

What is the minimum age for Snapchat?

Snapchat’s minimum age is 13 in the United States, in compliance with COPPA. However, there is no reliable age verification. Many younger children access the platform by entering a false birthdate. If a child registers as 18 or older, Snapchat’s teen safety protections, including Family Center, do not apply to their account.

Can Snapchat be blocked on a child's phone?

Yes. Canopy’s App Management feature allows parents to block the Snapchat app from being installed or opened on a child’s device. This works at the device level, independently of Snapchat’s own settings, and cannot be bypassed by the child without the parent’s password.

Is Snapchat's Snap Map dangerous for kids?

It can be. Location sharing is off by default for teen accounts, but teens can turn it on, and parents cannot remotely force Ghost Mode. Once a teen shares their location on Snap Map, every accepted friend can track their real-time movements. Family Center lets parents request location sharing from their teen, but the teen has to agree.

Why do predators use Snapchat to target kids?

Snapchat’s disappearing messages create a false sense of security that makes children more likely to share personal or explicit content. Predators exploit this, along with the platform’s location features and the ease of adding contacts, to groom minors in ways that leave little evidence. Multiple state attorneys general and law enforcement agencies in the U.S. and U.K. have flagged Snapchat specifically as a high-risk platform for this reason.

Does Snapchat notify parents of suspicious activity?

No. Snapchat’s Family Center does not send real-time alerts for suspicious contacts or concerning messages. It shows historical activity: who a teen talked to, how much time they spent on the app. It does not send a notification when something concerning happens in the moment. For real-time protection, parents need a device-level tool like Canopy.

Is there a safer alternative to Snapchat for kids?

For younger children, dedicated kids’ communication apps with genuine content controls are the safer choice. For teens who are socially connected to Snapchat, the most realistic approach is running both Family Center and Canopy: Family Center for visibility, Canopy for active blocking and content filtering. Together they cover the gaps that each tool leaves on its own.