It depends on the subject and the state. For anyone under 18, an increasing number of states treat AI-generated explicit imagery the same as real child sexual abuse material, meaning that creating, possessing, or sharing it is a criminal offense. Massachusetts enacted such a law in 2024. For adults, nonconsensual deepfake laws exist in some states, but coverage is inconsistent. Laws in this area are changing quickly.

Key Takeaways

- AI tools kids use for homework, including ChatGPT, Grok, and Gemini, can generate explicit images and text on demand.

- A RAND survey found that 1 in 5 U.S. high schools and middle schools have dealt with a deepfake bullying incident in the past two school years.

- Standard URL-based content filters miss AI-generated explicit content because no adult-site domain is involved. Visual filtering is the approach that works.

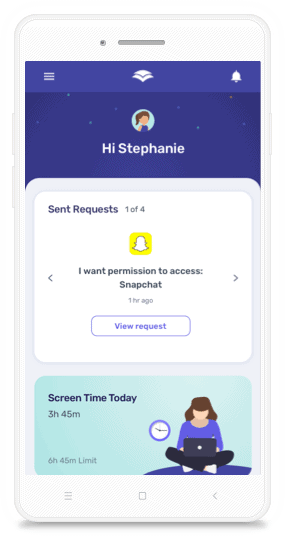

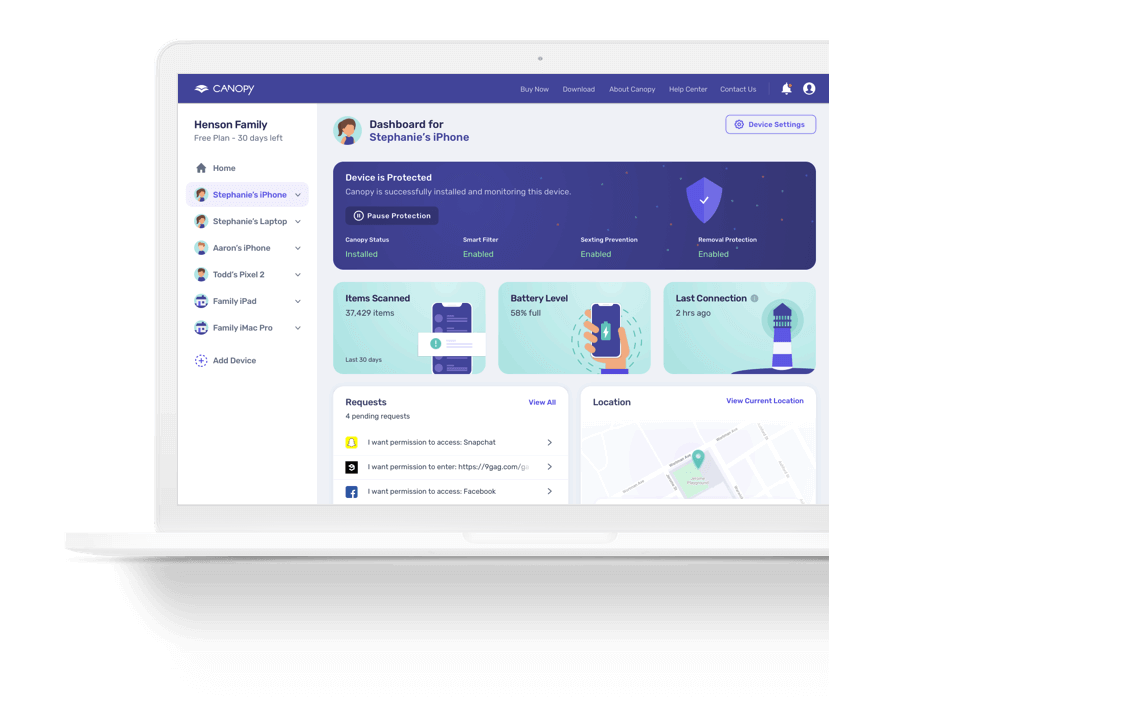

- Canopy’s Smart Filter blocks AI-generated explicit images the same way it blocks real ones, including explicit anime and hentai.

- Canopy’s Chatbot Content Filter covers ChatGPT, Grok, Gemini, Perplexity, and Claude at the device level, not the account level.

- Parents who want to restrict access to AI tools entirely can do that through Canopy’s AI content category, which covers all major AI apps and domains in one setting.

In November 2023, administrators at Lancaster Country Day School in Pennsylvania learned that two male students had used AI to generate more than 300 explicit nude images of their female classmates. It’s one of the most thoroughly documented cases of AI-generated explicit content circulating inside a school. More than 50 girls were targeted. The school did not contact law enforcement for six months.

That case drew national coverage, but it isn’t isolated. A RAND survey of K–12 principals conducted in October 2024 found that 1 in 5 high schools and middle schools had dealt with a deepfake bullying incident in the previous two school years. The tools involved cost as little as $4.99. The images take seconds to generate.

According to Common Sense Media, 73% of teenagers have encountered pornography online by age 17, and more than half first encountered it before turning 13. The effects on teenagers are well documented. What has changed is the source: explicit content can now be generated on demand, inside tools kids already use for schoolwork, and it can feature the faces of real people they know.

The forms AI-generated explicit content takes

Several distinct types are worth understanding separately, because they reach kids through different routes.

The most discussed is the deepfake: an explicit image made by placing a real person’s face onto a generated body. For teenagers, this usually means a classmate’s face taken from a social media photo or school picture. Francesca Mani’s case brought the issue to public attention: as a 10th grader, she discovered that boys in her class had used AI software to fabricate explicit images of her and other female classmates. Her case is the most widely covered, but the pattern it represents has shown up in schools across the country. In Massachusetts, Governor Healey signed legislation in 2024 making it a criminal offense to create, possess, or share these materials involving anyone under 18, whether the image is real or AI-generated. Most states have no equivalent law yet.

Beyond deepfakes, there are AI image generators that produce explicit content entirely from text prompts. Most major image generators have content policies. Many are easily bypassed, and some tools have no restrictions at all. A teenager who opens an AI image tool to generate artwork has access, in the same session, to something that produces explicit imagery on request.

AI chatbots are a separate route. ChatGPT, Grok, and Gemini are built with content guardrails, but those guardrails vary in strength and can often be worked around through specific prompting. Explicit text content, and in some cases images, can come from the same tools teenagers use daily for homework help.

Then there’s AI-generated anime and hentai. Explicit animated content is sometimes treated as less serious than photorealistic imagery. It isn’t. AI tools generate explicit anime content at scale, it’s widely accessible, and teenagers encounter it regularly.

Why standard filters miss AI-generated explicit content

Most content filters work by maintaining a blocklist of known adult domains and URLs. When a device tries to connect to one of those addresses, the filter steps in.

That approach doesn’t help when explicit content is generated through a mainstream AI tool. The request goes to chatgpt.com or gemini.google.com. Neither domain appears on any blocklist, because neither is an adult site. The explicit content is produced inside a tool the filter had no reason to block. URL filtering was built for a different era of the internet.

What works instead is filtering at the content level, not the address level. A filter that evaluates imagery visually, in real time, doesn’t care where the content came from. A synthetically generated explicit image and a photographed one look the same to a visual filter, which is exactly why visual filtering is the relevant approach here.
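To make the difference concrete, here is a minimal Python sketch of the two approaches. It is an illustration under stated assumptions, not how Canopy or any particular product is implemented: the blocklist entries are made-up example domains, and `explicit_score` is a hypothetical stand-in for whatever an image classifier would return.

```python
from urllib.parse import urlparse

# Hypothetical blocklist; real filters maintain far larger lists.
BLOCKED_DOMAINS = {"adult-site.example", "explicit.example"}


def url_filter_blocks(url: str) -> bool:
    """Address-level check: block only if the host is on the blocklist."""
    host = (urlparse(url).hostname or "").lower()
    # Match the listed domain itself or any subdomain of it.
    return any(host == d or host.endswith("." + d) for d in BLOCKED_DOMAINS)


def content_filter_blocks(explicit_score: float, threshold: float = 0.8) -> bool:
    """Content-level check: decide from a score for the image itself.

    explicit_score is assumed to come from an image classifier (hypothetical);
    the source of the image plays no part in the decision.
    """
    return explicit_score >= threshold


if __name__ == "__main__":
    # A known adult domain is caught by the address-level check.
    print(url_filter_blocks("https://adult-site.example/gallery"))
    # A mainstream AI tool is not on the list, so the request passes.
    print(url_filter_blocks("https://chatgpt.com/"))
    # The content-level check looks only at the image score.
    print(content_filter_blocks(0.95))
```

The address-level function never sees the image, so it has nothing to go on once the domain is a mainstream one. The content-level function ignores the address entirely, which is why the origin of the image stops mattering.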

What Canopy does about it

Canopy’s Smart Filter evaluates content visually in real time. Because it works at the image level rather than the URL level, it treats AI-generated explicit content the same way it treats photographed explicit content. A synthetically generated image triggers the same filter. This applies to photorealistic imagery and to explicit anime and hentai, both of which Canopy filters.

“The challenge with AI-generated explicit content is that it doesn’t come from adult sites. It comes from the same tools kids open for homework. URL-based filtering wasn’t built for this. Visual filtering is.”

— Yaron Litwin, digital safety expert at Canopy

For AI chatbots specifically, Canopy has a dedicated Chatbot Content Filter covering the major tools in active use: ChatGPT, Grok, Google Gemini, Perplexity, and Claude. When a conversation moves toward explicit content, Canopy intervenes before that content is returned. The filtering is at the browser and device level, not the account level. Switching accounts or opening a new tab doesn’t change anything. For the native chatbot apps, Canopy blocks the app and routes users to the browser version, where filtering is active.

If you’d rather restrict access to AI tools entirely, Canopy’s AI content category lets you block or allow the full set of major AI apps and websites in one place.

For a broader look at where chatbots create risk for kids, see our guide to AI chatbot safety.

Canopy runs on iPhone, Android, iPad, Mac, Windows, and Chromebook. If it’s installed on a device, the protection is on. And it’s included in every Canopy plan: nothing to upgrade, nothing to turn on.

Worth saying plainly: filtering reduces risk, but it doesn’t eliminate it. Canopy works best alongside parental involvement and direct conversation with your kids.

How to talk to your kids about this

The conversation most families need to have isn’t a general “avoid explicit content” talk. It needs to be more specific.

Kids should know that explicit images can be created of anyone using photos that are already public, including photos from their own accounts. They should know what to do if they see an image like that of someone they know: don’t share it, and tell a trusted adult immediately. They should also understand that creating or distributing these images of another person carries real consequences. In many states, that includes criminal charges even for minors. The five middle school students expelled by Beverly Hills Unified School District in 2024 for creating and sharing explicit deepfakes of their female classmates are a clear example of how seriously schools and law enforcement are treating these incidents.

For younger kids, the conversation can stay simple: AI tools can show things that aren’t appropriate, just like some websites can, and the same rules apply.

This guide on talking to your child about inappropriate pictures has more on framing these conversations by age.

AI-Generated Explicit Content FAQ

Can AI tools actually generate explicit images?

Yes. Several major AI image generators can produce explicit imagery through prompting, either because they have no content restrictions or because their restrictions are straightforward to bypass. Dedicated tools designed specifically to generate explicit images from uploaded photos are also widely available, some for as little as $4.99.

Does Canopy block AI-generated explicit content?

Yes. Canopy’s Smart Filter evaluates imagery visually in real time, which means AI-generated explicit images are filtered the same way photographed explicit images are. It also covers explicit anime and hentai. Canopy’s Chatbot Content Filter extends that protection to AI chatbots including ChatGPT, Grok, Gemini, Perplexity, and Claude. If you’re looking for a broader comparison of content filtering tools, see our guide to the best porn blockers.

What is a deepfake and how does it affect kids?

A deepfake is a synthetic image or video that places a real person’s likeness into generated content. For teenagers, this most commonly means explicit images created using a classmate’s face from a photo posted online. The emotional and psychological harm to victims can be severe. A RAND survey found that 1 in 5 high school and middle school principals reported a deepfake incident at their school in the 2023–2024 or 2024–2025 school year.