Meta has announced it will appeal both verdicts. The New Mexico penalty of $375 million is based on $5,000 per violation, the maximum under state law. A second trial phase in May could add more penalties and require specific platform changes. The California case, while smaller in dollar terms, could open the door to far larger liability across thousands of pending lawsuits.

Key Takeaways

- A New Mexico jury ordered Meta to pay $375 million on March 24 for misleading users and failing to protect children from predators on Instagram, Facebook, and WhatsApp.

- A California jury found Meta and YouTube negligent on March 25, awarding $6 million in a bellwether case tied to roughly 2,000 similar lawsuits.

- Both verdicts are firsts: the first jury win by a state over child safety, and the first jury finding that social media companies were negligent in how they designed their platforms.

- Internal Meta documents showed employees warned about child exploitation risks and discussed how encryption would limit the reporting of abuse material to law enforcement.

- Meta will appeal both verdicts. A second New Mexico trial phase in May could mandate platform changes, and a federal bellwether trial begins in Oakland this summer.

- Parents don’t need to wait. Device-level content filters like Canopy protect children across every app and browser right now.

On March 24, 2026, a New Mexico jury ordered Meta to pay $375 million for misleading users about the safety of Instagram, Facebook, and WhatsApp while children were being targeted by sexual predators on those platforms. The next day, a California jury found Meta and YouTube negligent in how they designed their platforms, awarding $6 million in damages in a case tied to roughly 2,000 similar lawsuits.

Some experts are already calling last week Big Tech’s “Big Tobacco moment.” Meta says it will appeal both verdicts.

If your kids use Instagram, Facebook, WhatsApp, or YouTube, here is what these cases actually revealed, why the platforms probably won’t change anytime soon, and what you can do about it today.

The New Mexico Verdict: $375 Million

After a nearly seven-week trial in Santa Fe, jurors sided with New Mexico Attorney General Raúl Torrez on both claims brought under the state’s Unfair Practices Act. They found that Meta willfully misled users about the safety of its platforms, and that Meta engaged in what the jury called “unconscionable” business practices that took advantage of children’s inexperience.

The jury identified roughly 75,000 individual violations and applied the maximum penalty of $5,000 each, totaling $375 million. Even so, that was less than a fifth of the roughly $2.1 billion prosecutors had asked for.

This case started with a sting operation, not a policy paper. In 2023, investigators in the AG’s office set up fake accounts on Facebook and Instagram posing as children under 14. Those accounts were quickly flooded with sexually explicit material and contacted by adults looking for sexual content. The operation led to at least three arrests.

The state then sued Meta, alleging the company had created what prosecutors described as a “breeding ground” for predators.

What Came Out at Trial

The New Mexico proceeding forced a lot of Meta’s internal communications into the open. Three things stood out.

Meta’s own employees raised alarms that went unheeded. Staff and outside child safety experts repeatedly warned the company about dangers on its platforms. Prosecutors argued those warnings were ignored.

Internal messages also showed employees discussing how CEO Mark Zuckerberg’s 2019 decision to add default end-to-end encryption to Facebook Messenger would affect the company’s ability to detect and report child sexual abuse material (CSAM). At the time, Meta was sending roughly 7.5 million such reports per year to law enforcement.

Separately, Meta has announced that end-to-end encrypted messaging on Instagram will no longer be supported after May 8, 2026. The company cited low adoption as the reason, though the timing coincides with growing legal and regulatory pressure around encryption and child safety. WhatsApp and Messenger will keep encryption.

The jury also examined evidence that Meta failed to enforce its own minimum age of 13 for Instagram and Facebook, and that Meta’s algorithms pushed harmful content toward younger users. Jurors weighed whether statements by Zuckerberg, Instagram head Adam Mosseri, and global safety chief Antigone Davis had misled users about how safe the platforms actually were.

The California Verdict: $6 Million, and a Much Bigger Threat to Meta

One day after the New Mexico ruling, a jury in Los Angeles found Meta and Google’s YouTube negligent in the design of their platforms and ruled that both companies failed to adequately warn users of the dangers. The case centered on a 20-year-old woman, identified as K.G.M., who said she began using YouTube at age 6 and Instagram at age 9. She testified that the apps fueled depression, anxiety, and body dysmorphia.

The jury awarded $3 million in compensatory damages and $3 million in punitive damages, with Meta responsible for $4.2 million (70%) and Google for $1.8 million (30%). The dollar amounts are small for companies worth trillions. The legal precedent is not.

This was a bellwether case, meaning its outcome is expected to influence roughly 2,000 similar lawsuits by families and school districts. A federal bellwether trial in Oakland, drawn from a separate pool of consolidated cases, is set to begin in June 2026. TikTok and Snapchat, originally co-defendants in the California case, settled before the trial started.

New Mexico AG Torrez connected the two results: “Juries in New Mexico and California have recognized that Meta’s public deception and design features are putting children in harm’s way.”

What Happens Next (Don’t Hold Your Breath)

Neither verdict forces Meta to change its platforms today. Meta has announced it will appeal both decisions.

In New Mexico, a bench trial set for May 4 will decide whether Meta created a public nuisance and whether a judge can order specific changes, including age verification, predator removal, and protections for minors in encrypted messaging. In California, the bellwether verdict sets a template for thousands of pending cases, but each one will still need to be resolved.

On top of that, over 40 state attorneys general have their own lawsuits against Meta, and a federal bellwether trial is on the calendar for this summer. The legal pressure on Meta is real and growing. But if you are waiting for it to trickle down into safer apps on your child’s phone, you could be waiting a long time.

Why This Matters to Your Family

The uncomfortable takeaway from both verdicts is that Meta’s own safety systems were not enough. In New Mexico, undercover investigators posing as 13-year-olds were contacted by predators within days. In California, a jury found that Meta and YouTube were negligent in how their apps were designed and that they failed to warn users about the risks to young people.

Meta’s lawyers acknowledged during the New Mexico trial that some harmful content and bad actors get through the company’s safety filters. That is a telling admission. Executives, for their part, used the phrase “problematic use” to describe how some young users engage with their apps, while stopping short of accepting the idea that social media can be addictive.

“What we saw last week is a jury confirming what parents have been feeling in their gut for years: the platforms your kids use every day were not built with their safety as the priority,” said Yaron Litwin, Chief Marketing Officer at Canopy. “These verdicts should settle the question of whether the problem is real. Now the question is what you do about it.”

Four Things You Can Do Right Now

1. Check what’s actually on your child’s phone. Open the device and look at what’s installed. Do they have Instagram, Facebook, WhatsApp, or YouTube? Are those accounts locked down with the strictest available privacy settings? Remember that Meta’s age floor of 13 is poorly enforced. The New Mexico case made that explicit.

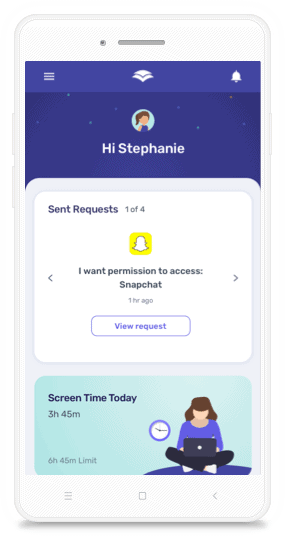

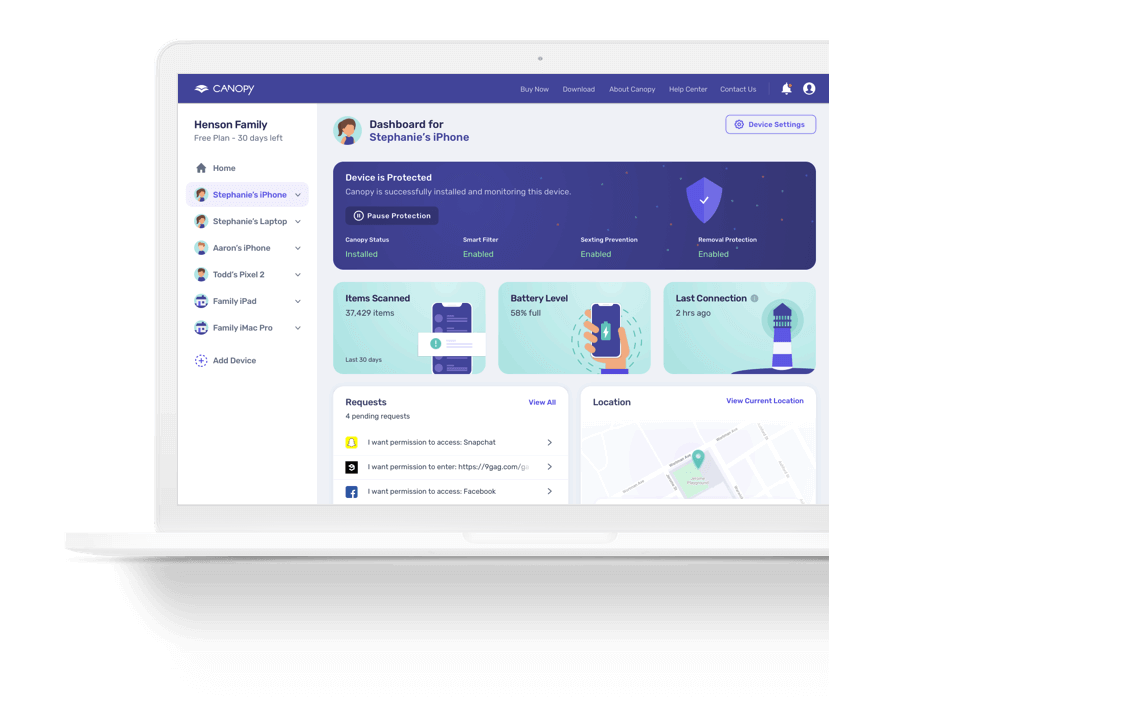

2. Add protection that covers the whole device. Relying on any single app’s parental controls is like locking the front door but leaving every window open. If explicit content comes through a browser, a messaging app, or a different platform, your child is exposed.

A device-level content filter like Canopy works across all websites and social platforms on the device, using AI to analyze images and text and filter explicit content before it reaches your child. That closes exactly the kind of gap these trials exposed: harmful material sliding past a single platform’s safety net.

“The platforms promised parents they had it covered. Two juries just said otherwise,” Litwin added. “Device-level filtering gives families a layer of protection that doesn’t depend on Meta, Google, or any single company keeping its promises.”

Unlike accountability-style tools that monitor and report your child’s activity after the fact, Canopy blocks harmful content in real time. For younger kids especially, that distinction matters. You can also see how Canopy compares to other internet filters.

3. Have the hard conversation. During the New Mexico trial, teachers testified about sextortion schemes hitting their students: predators tricking or pressuring kids into sharing explicit images, then blackmailing them. In the California trial, the plaintiff described how compulsive social media use pulled her away from family, school, and her own sense of self-worth.

These are awkward conversations. They also save lives. Talk to your kids about what to do if a stranger messages them, why sharing personal photos with anyone is risky, and how to come to you if something goes wrong. Our guide to dealing with sextortion has specific steps you can walk through together.

4. Follow what happens next. This story is moving fast. The May bench trial in New Mexico, the federal trial starting this summer, and over 40 state AG lawsuits will all shape what protections eventually reach (or don’t reach) your child’s screen. Staying informed helps you spot the gaps.

Don't rely on Meta's parental controls. Try Canopy today!

Get 20% off using code SAVE20

FAQs

What did Meta do that led to the $375 million verdict?

A New Mexico jury found that Meta broke the state’s consumer protection law by making misleading claims about the safety of Instagram, Facebook, and WhatsApp, and by failing to stop sexual predators from targeting children on those platforms. The case grew out of a 2023 undercover sting in which state agents posed as kids under 14 and documented the explicit material and predatory contact those accounts received.

What happened in the California Meta trial?

A Los Angeles jury found Meta and YouTube negligent in the design of their platforms and ruled that both companies failed to adequately warn users of the dangers. The jury awarded $6 million in damages, with Meta responsible for $4.2 million and Google for $1.8 million. This was a bellwether case expected to influence roughly 2,000 similar lawsuits by families and school districts.

Will Instagram and Facebook get safer for kids after this?

Not right away. Neither verdict forces Meta to change its products. The May bench trial in New Mexico will decide whether a court can order specific changes like age verification and predator removal. Even then, appeals could stretch the timeline significantly.

What age do you have to be to use Instagram or Facebook?

Meta says 13. The New Mexico case showed how poorly that rule is enforced. Kids create accounts every day with no real age check.

How can I protect my child right now?

Check which apps your child has and tighten privacy settings. Add a device-level content filter like Canopy, which blocks explicit content across all websites and social platforms instead of depending on any single platform. Talk to your kids about sextortion risks and make sure they know how to come to you if something feels wrong.

Are other states suing Meta?

Yes. Over 40 state attorneys general have filed lawsuits. A federal bellwether trial is set to begin in Oakland this summer, and additional state-level trials are expected later in 2026.